What is Document Analytics? And how is it relevant for my business?

In the last two weeks, a few colleagues from our team participated in the Swiss Re Tech Days in London and in Zurich to speak about Document Analytics to a large crowd of reinsurance leaders and analytics professionals. There was a keen interest in the topic and we received a lot of questions around automation, document analytics and NLP in general. In this blog post, we shed some light on the field and give practical answers to the most pressing questions.

What is Document Analytics?

Document Analytics is a field of study which makes use of natural language processing (NLP) techniques in order to automate repetitive document processing tasks or to gain a deeper understanding of the documents' content. Document Analytics is a key differentiator and enabler for industries where documents (some even still in paper form) are at the core of many business processes. This is the case in insurance and in the financial industry in general.

What exactly is NLP?

Natural Language Processing is a sub-field of AI enabling machines to analyze, understand and generate human language, which can be in either written or spoken form. Typical applications of NLP include text-to-speech applications (e.g. virtual assistants), sentiment analysis and real-time speech translation, to name a few. As far as Document Analytics is concerned, mainly text mining is of interest. Text mining is a type of analytics that identifies key concepts or entities in large quantities of documents and converts them into structured data, from which actionable insights can then be derived.

Why is processing documents difficult?

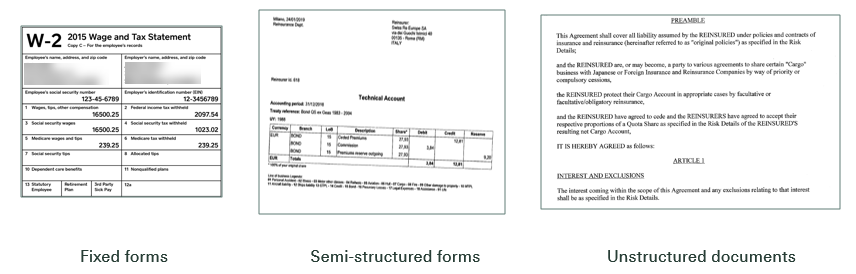

Machines are very good at processing information stored in defined structures, such as database columns or standardized formats like XML or JSON. The challenges in processing documents are manifold: the machine needs to be able to deal with different formats, understand the document's structure, and disambiguate the actual content in order to make sense of it.

Words and phrases in a document have a given meaning and given relationships to other words and phrases, which heavily depend on the context in which they occur. Consider the following example:

"I saw a jaguar in the zoo." -> animal

and

"My friend crashed his brand-new Jaguar" -> car
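To make the idea of context-dependent meaning concrete, here is a minimal sketch in Python. It is not how a production NLP engine works; it simply counts hand-picked context words around an ambiguous term. The cue-word lists and the function name `disambiguate` are our own assumptions for illustration, and a real system would draw such knowledge from an ontology or a trained model.

```python
import re

# Hypothetical cue words per sense (an assumption for this sketch);
# a real system would take these from a knowledge base or ontology.
SENSE_CUES = {
    "animal": {"zoo", "cage", "jungle", "fur", "prey"},
    "car": {"crashed", "drove", "engine", "garage", "brand-new"},
}


def disambiguate(sentence: str) -> str:
    """Return the sense whose cue words overlap most with the sentence."""
    # Lowercase and split into word tokens, keeping hyphenated words together.
    tokens = set(re.findall(r"[a-z]+(?:-[a-z]+)*", sentence.lower()))
    scores = {sense: len(tokens & cues) for sense, cues in SENSE_CUES.items()}
    best = max(scores, key=scores.get)
    # Without any supporting context word we refuse to guess.
    return best if scores[best] > 0 else "unknown"


print(disambiguate("I saw a jaguar in the zoo."))
print(disambiguate("My friend crashed his brand-new Jaguar"))
```

The word "jaguar" itself carries no score here; only the surrounding words decide, which is exactly why context matters.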

In addition to semantic complexity, documents can vary in structure (e.g. tables in invoices and accounting documents, or free text in e-mails) and come in different technical formats (docx, pdf, xps, etc.).

One approach that we take in order to address these challenges is rule-based NLP. This allows us to build robust document analytics solutions without having to depend on large sets of annotated training data, which are often not available in industry.

How does the processing of documents in rule-based systems work?

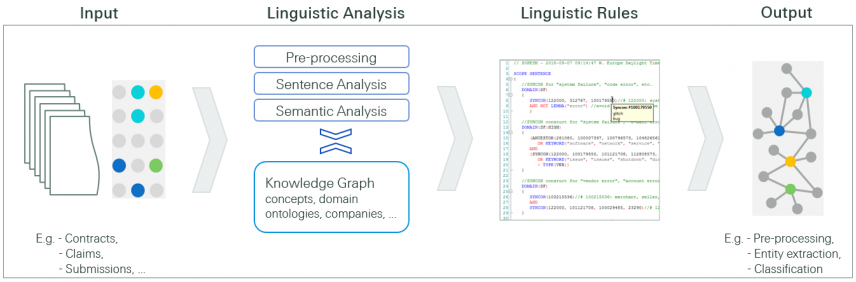

Starting from a document, the first necessary step is often to perform OCR (Optical Character Recognition), in other words, to convert a scanned image into machine-readable text. Subsequently, a complex linguistic analysis is carried out, based on different algorithms and a knowledge base, e.g. an ontology. This forms the basis for linguistic rule-writing, which leverages the information produced by the linguistic analysis. By means of these rules, we can carry out tasks such as entity extraction or document classification. Thus, the formerly unstructured data is transformed into structured data, which can be further processed.
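The last step, turning unstructured text into structured data by means of rules, can be sketched in a few lines of Python. This is a deliberately simplified illustration: it assumes OCR has already produced plain text, and it replaces the linguistic analysis and knowledge base with plain regular expressions. The field names, patterns and the `extract_invoice_fields` helper are hypothetical and do not reflect any specific product's rule language.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class InvoiceFields:
    invoice_number: Optional[str]
    total_amount: Optional[float]
    currency: Optional[str]


# Hypothetical extraction rules (assumptions for this sketch).
INVOICE_NO_RULE = re.compile(
    r"invoice\s*(?:no\.?|number)\s*[:#]?\s*([A-Z0-9-]+)", re.IGNORECASE
)
TOTAL_RULE = re.compile(
    r"total\s*(?:amount)?\s*:?\s*(CHF|EUR|USD|GBP)\s*([0-9][0-9,']*\.?[0-9]*)",
    re.IGNORECASE,
)


def extract_invoice_fields(text: str) -> InvoiceFields:
    """Apply the rules to OCR output and return structured fields."""
    number_match = INVOICE_NO_RULE.search(text)
    total_match = TOTAL_RULE.search(text)

    amount = None
    currency = None
    if total_match:
        currency = total_match.group(1).upper()
        # Strip thousands separators such as "," or "'" before parsing.
        amount = float(total_match.group(2).replace(",", "").replace("'", ""))

    return InvoiceFields(
        invoice_number=number_match.group(1) if number_match else None,
        total_amount=amount,
        currency=currency,
    )


sample = "Invoice No: INV-2019-042\nDate: 12 March 2019\nTotal amount: CHF 1,250.50"
print(extract_invoice_fields(sample))
```

Fields that no rule matches stay `None`, so downstream processing can tell "not found" apart from a real value instead of silently working with a guess.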

What can or should be automated: Where do I start?

This question is difficult to answer in general, but a few key points can be taken into consideration: you either automate the processing of documents occurring in high volumes (e.g. invoices), or you focus on documents whose review or processing takes a lot of manual work (contracts, technical reports, etc.). Good candidates for automation are usually processes which require manual data entry.

How much time can be saved using document analytics?

This really depends on the use case, i.e. the documents concerned and the type of manual task that is to be automated. Sometimes the gain is (also) an increase in quality: instead of spending time looking for information, users can dedicate their time to the analysis and decision-making part of the task.

Who has the final decision? Who is responsible?

The word "automation" is often misleading. Many of today's automation solutions are only partial automation. Only in very few cases does the system perform a full validation and take the subsequent decision itself. The more common scenario in industry is that users benefit from enriched decision support by the machine, while the ultimate decision resides with the human. Another way of looking at this is to ask: "How much harm or monetary impact does the decision have?" Does the decision have a low impact (e.g. we reject the payment of an invoice because we believe it to be fraudulent) or a high impact (e.g. acceptance of a multi-million contract)?
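One way to picture partial automation is a simple routing rule that only lets the machine decide on its own when the impact is low, and hands everything else to a human. The sketch below is purely illustrative: the thresholds, the confidence score and the names `Suggestion` and `route` are our own assumptions, and appropriate values depend entirely on the business context.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    label: str               # what the system proposes, e.g. "reject invoice"
    confidence: float        # 0.0 to 1.0, assumed to come from the system
    monetary_impact: float   # estimated impact of the decision


# Hypothetical thresholds (assumptions for this sketch).
MAX_AUTO_IMPACT = 10_000.0
MIN_AUTO_CONFIDENCE = 0.95


def route(suggestion: Suggestion) -> str:
    """Return "auto" for low-impact, high-confidence cases, else "human_review"."""
    low_impact = suggestion.monetary_impact <= MAX_AUTO_IMPACT
    confident = suggestion.confidence >= MIN_AUTO_CONFIDENCE
    if low_impact and confident:
        return "auto"
    # High impact or low confidence: the machine only supports the decision.
    return "human_review"


print(route(Suggestion("reject invoice", 0.98, 1_200.0)))
print(route(Suggestion("accept contract", 0.99, 5_000_000.0)))
```

In this sketch a multi-million contract always lands with a human reviewer, no matter how confident the system is.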

Are there any tools you recommend using for this?

The market around document processing and analysis is very dynamic, but we recommend having a look at the following tools which we find quite promising. We use some of those in our daily work:

- ABBYY FlexiCapture for custom form-based extraction.

- Amazon Textract for OCR and extraction in the cloud.

- Expert System's Cogito for rule-based NLP processing.

- Docparser.com for very simple form-based extraction.

Do you have any questions around Document Analytics?

If you have some questions around Document Analytics which were not covered in this post, we would be more than happy if you could share those with us! Feel free to leave a comment below this blog post.

This article was written in cooperation by Marc Giombetti and Susanna Tron.

Very interesting article, many thanks.

I’m currently working to solve a related problem on how to build symptom-failure mode relationships based on past (unstructured) service records for the vehicle industry – we are always looking for shortcuts…

Best regards,

Kjeld Jespersen

Dear Kjeld,

Many thanks for your feedback. I would be very much interested to hear more about the problem you are facing and how you plan to build symptom-failure mode relationships. Looking forward to an exchange.

Regards

Marc

Great article, and much value for the general public!

This is not far from becoming a new standard. I am in the insurance industry, and it is very interesting how new markets are becoming challenging for companies that are not on top of the latest trends in technology.